Control systems?

The goal of the day is to explore control systems and their main mechanics, but to do it all with words. Words? Usually, when I try to learn this particular subject, a lot of equations get thrown at me. Each of them is important, and maybe it's not possible to explore control systems without them. However, the goal is to do just that: explore as much of control system theory as I can, but without math. So what is control system theory?

Imagine a way to make something control itself automatically, without you having to watch it. Take the air conditioning in a room. A thermostat tracks the temperature in the room: if it is above the set temperature, it turns the air conditioning on, and if it is below the set temperature, it turns it off.
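The thermostat rule above can be sketched in a few lines of Python. This is only an illustration, not how any real thermostat is programmed: the function name `thermostat_step` and the `deadband` parameter are my own assumptions. The deadband is a small margin around the set temperature, added here so the sketch does not flip on and off constantly when the room sits right at the set point.

```python
def thermostat_step(current_temp, set_temp, ac_on, deadband=0.5):
    """Decide whether the air conditioning should run.

    deadband is a hypothetical margin (in degrees) around the set
    temperature, inside which the current on/off state is kept.
    """
    if current_temp > set_temp + deadband:
        return True   # too warm: turn the AC on
    if current_temp < set_temp - deadband:
        return False  # cool enough: turn the AC off
    return ac_on      # close to the set point: leave it as it is


# A warm room with the AC off: the thermostat switches it on.
print(thermostat_step(current_temp=26.0, set_temp=24.0, ac_on=False))
```

Nobody has to watch the room. The measurement goes in, a decision comes out, and the same function is called again a moment later.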

The same goes for the cruise control system in a car. It speeds the car up if it is below the set speed, and if the car is above it, it waits for the car to slow down.

And lastly, our body has a system that controls the blood glucose level. If it's too high, the body lowers it by releasing insulin, and if it's too low, the body raises it. We don't have to think about the system, we don't even know it's there, but it works and it works well.

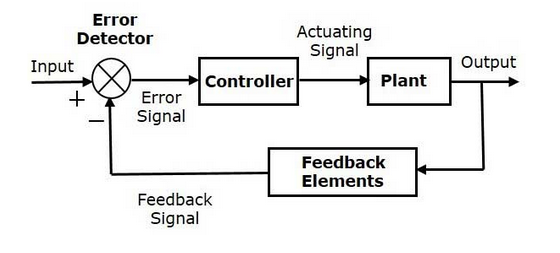

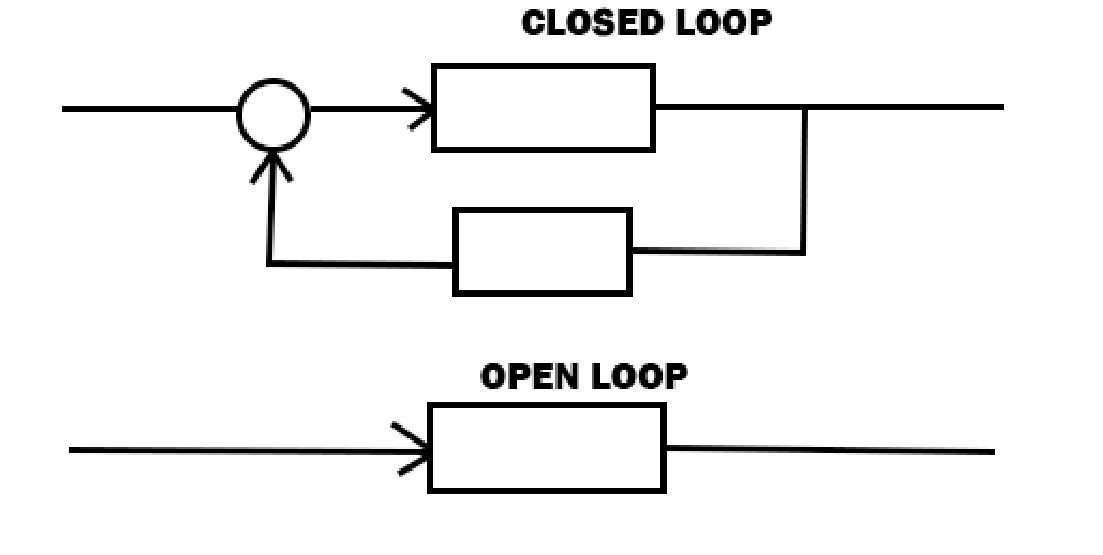

Every system that can sustain itself works on the same principle. When someone first taught me about control systems, I couldn't figure out what they were. Is it a way to make a robot that can somehow sustain itself? Is it a way to create self-driving cars? Well, it's not exactly that. The point is to break a complex subject down to its base principles, and then apply control systems, or look for them. For example, cruise control is one system of a self-driving car, another one is the proximity sensors, and another is motor voltage control. So the main point is that automating something always follows the same principle, and it is best described with a block diagram of a feedback loop.

The input is the value the system needs to follow, for example the desired temperature in the room. The controller is the part that decides what to do, for example a small computer in the air conditioning system. The plant is the system itself, the thing being controlled. The feedback element is the thermostat that tracks the current temperature. And that circle with an X in it is where we subtract the current value from the desired value to get the error value, or in other words, how far off we are.

Let me make another example of this picture. There is a restaurant and it has 4 waiters. If the restaurant is empty, not all 4 of them need to be present. But it might get crowded at some point. The input might be that one waiter is responsible for two tables. The controller in this case is the restaurant manager. The plant is all the waiters. The feedback element is the number of currently occupied tables. Therefore, if at one point there is one waiter and 3 tables are filled, the manager can call another waiter in so that all the tables can be served.

I can give one more example. It's actually from the book Feedback Control for Computer Systems by Philipp K. Janert. Mind you, it's not an exact quote, it's more of an interpretation by me. Let's say an online game has servers that players connect to when playing. The game has 10 servers, and they don't all need to run at the same time, because one server can hold 500 players. As an input we might say exactly that: 500 people can be on one server. The controller is some kind of system that can turn the servers on. The plant is simply all the servers. The feedback element is the player count. If we detect that there are 600 players and compare that feedback with the input, we know that there are too many people for one server, so we can spin up another one. Of course, there would be some kind of regulation in place that tries to predict whether the player count is rising fast, so that it can spin up a server before the overflow happens. It all seems straightforward, but if one wants to implement it properly, it's anything but straightforward.
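Here is a minimal Python sketch of that server example. It only covers the simplest version: compare the player count with the capacity and report how many servers to add or remove. The numbers 500 and 10 come from the example above; the function names are hypothetical, and the prediction part is left out entirely.

```python
import math

PLAYERS_PER_SERVER = 500  # input: how many players one server can hold
MAX_SERVERS = 10          # the game has 10 servers in total


def servers_needed(player_count):
    """How many servers the current player count calls for."""
    needed = math.ceil(player_count / PLAYERS_PER_SERVER)
    # Keep at least one server running, and never exceed what exists.
    return max(1, min(MAX_SERVERS, needed))


def control_step(player_count, running_servers):
    """Return the change in server count (positive means spin up)."""
    return servers_needed(player_count) - running_servers


# 600 players on a single server: one more server is needed.
print(control_step(player_count=600, running_servers=1))
```

A real controller would also have to deal with start-up delays and with player counts that bounce around the 500 mark, which is where it stops being straightforward.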

Right now I'm in a bad spot, because I know the road ahead contains a lot of confusing math, but the reader might not know that. That's a problem, because someone might not believe me. So instead of going straight into theory, I will say a couple of words about history. The history of system control. There is a great resource to read about that topic and here is the link: https://lewisgroup.uta.edu/history.htm It's a short excerpt from Frank Lewis's book Applied Optimal Control and Estimation: Digital Design and Implementation.

It describes how system control came to be, and as it turns out, there is no single moment in history where it started. There is, however, a moment when it was first written down as math. In 1868 J.C. Maxwell, the Scottish physicist and mathematician, described it in the form of equations, and that started the modern age of control systems. But we can go quite a while further back to find the first account of a closed loop control system. Around 300 BC the Greeks implemented the first system that had closed loop control. It can best be described as today's flush toilet system. At the time it was not used to flush toilets, or maybe it was, I don't know. They used it to track time. They had a container of water that needed to have the same water level at all times, so that the droplets coming out of it were consistent and evenly timed. That way they could track time reliably.

Right now this post is about 1000 words, and I don't think I have the necessary expertise to pretend to explain the math behind the important parts. However, I have to do at least a little. There are two types of system control. The first is closed loop, and the second is open loop.

So far I have mentioned only closed loop systems and I will continue with them, so open loop systems will be left behind. However, one example of an open loop system is a washing machine. It has predetermined stages, for example soaking, spinning, and drying. Usually those are on a timer, and when one ends the next begins, so there is no need for a closed loop, because everything is predictable every time. Onwards.

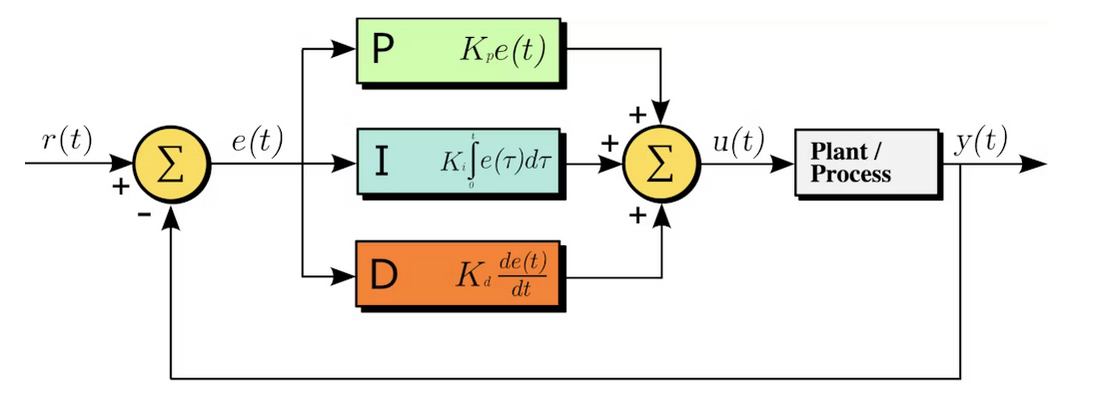

The main thing that concerns us when implementing a closed loop control system is the regulator. The regulator is the brain of our system. And when we look at it, the regulator is the only thing we can change. The most common type of regulator is called a PID regulator.

It's a combination of 3 components: proportional, integral, and derivative. It's possible to implement only the proportional one, which is called a P regulator, or only the proportional and integral ones, which is called a PI regulator. Those are all just names, so what actually are the proportional, integral, and derivative components? The proportional, or P, regulator is the simplest of them. It takes the error value (input - output), multiplies it by some number we set, and feeds the result into the system we are trying to regulate. The problem with the P regulator is called steady state error. Because the regulator's output is zero whenever the error is zero, in practice the system often settles at a point where a small persistent error remains, just enough to sustain the needed output.
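To see the steady state error without solving anything by hand, here is a small Python sketch. Everything about the "room" is an assumption made up for illustration: a heater warms the room in proportion to the regulator output, and the room loses heat to the outside in proportion to the temperature difference. The names `simulate_p`, `kp`, `loss`, and `ambient` are hypothetical.

```python
def simulate_p(kp, setpoint=22.0, ambient=10.0, loss=0.5, dt=0.1, steps=500):
    """Run a P regulator on a toy room model and return the final temperature.

    Assumed toy model: each small time step the room gains heat from the
    heater and loses heat to the outside in proportion to how much warmer
    it is than the ambient temperature.
    """
    temp = ambient
    for _ in range(steps):
        error = setpoint - temp   # how far off we are
        heater = kp * error       # P regulator: error times a number we set
        temp += dt * (heater - loss * (temp - ambient))
    return temp


final = simulate_p(kp=2.0)
print(f"set point 22.0, room settles at {final:.2f}")
```

With these made-up numbers the room settles around 19.6 degrees instead of 22. At that point the remaining error produces exactly enough heater output to balance the heat loss, so nothing pushes the temperature any closer. A larger `kp` shrinks the gap but never closes it, and in this simple simulation a very large `kp` makes the temperature oscillate wildly instead of settling.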

To mitigate that we can add an integral component, so now we have a PI regulator. Integrals add up the area under a function. In our regulator the integral component adds up the error over time, so as long as any error remains, its contribution keeps growing until the output reaches our desired set point value. And if we want to make the system even more stable, we can add a derivative component, aka the D component. Taking the derivative of a function at a point gives us the slope of the function at that point. In the same way, the regulator can see whether the error is growing or shrinking, and it can see it immediately. That way the regulator can start correcting a change before it has fully developed.
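The three components fit into a short Python class. This is a bare textbook-style sketch under my own assumptions, not production code: the class name `PID`, the gains `kp`, `ki`, `kd`, and the toy room model in `simulate` are all made up for illustration, and real implementations usually add things like output limits.

```python
class PID:
    """Minimal PID regulator sketch (no output limits, no filtering)."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0      # running sum of the error over time
        self.prev_error = None   # last error, needed for the slope

    def update(self, setpoint, measured, dt):
        error = setpoint - measured
        self.integral += error * dt  # I: area under the error curve
        if self.prev_error is None:
            derivative = 0.0         # no slope available on the first call
        else:
            derivative = (error - self.prev_error) / dt  # D: slope of the error
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def simulate(pid, setpoint=22.0, ambient=10.0, loss=0.5, dt=0.1, steps=2000):
    """Toy room model (an assumption): heater input minus heat loss."""
    temp = ambient
    for _ in range(steps):
        heater = pid.update(setpoint, temp, dt)
        temp += dt * (heater - loss * (temp - ambient))
    return temp


print(f"P only: {simulate(PID(2.0, 0.0, 0.0)):.2f}")
print(f"PI:     {simulate(PID(2.0, 1.0, 0.0)):.2f}")
print(f"PID:    {simulate(PID(2.0, 1.0, 0.1)):.2f}")
```

In this toy setup the P-only run stops short of the set point, while the PI and PID runs end up at 22, because the integral term keeps growing for as long as any error is left.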

And that's a weak explanation of the PID regulator. I recommend the book Control Systems for Complete Idiots by David Smith. It's a great short book that explains control systems in depth in an easy to read way. Of course there is some math in there, it's just the nature of the subject.

Right now I have explained the PID regulator with words, but what if we actually want to calculate how our regulator will act? We can try to do it by solving some differential equations, but we won't get far. In order to get anywhere, the Laplace transform is used. It's a way to transform functions from the time domain to the S domain. What's the S domain? Well it's god damn complicated ok... I don't know how to explain it, I barely know of it. There is a way to transform functions from the time domain to the S domain just by using a table of pre-solved common transforms, and that's how it's usually done. So in a way we can use math just as a tool that we don't know anything about. I'm not saying that's a good thing. It's far from good, but as a start it is comforting.

It's a complicated subject, however it does not have to be. If, for example, we want to implement a PID controller in some kind of device, we can do it with an Arduino microcontroller. It's not that hard to implement in code, and I have made a post about programming one in the Arduino IDE: https://www.fallingdowncat.com/pi-regulator-in-code/

Thanks for reading.